Recently, deep ConvNets have significantly improved image classification and object detection accuracy. Compared to image classification, object detection is a more challenging task that requires more complex methods to solve. Due to this complexity, current approaches train models in multi-stage pipelines that are slow and inelegant.

The complexity arises because detection requires accurate localization of objects, which creates two primary challenges. First, numerous candidate object locations (often called "proposals") must be processed. Second, these candidates provide only rough localization that must be refined to achieve precise localization. Solutions to these problems often compromise speed, accuracy, or simplicity.

In this paper, we streamline the training process for state-of-the-art ConvNet-based object detectors. We propose a single-stage training algorithm that jointly learns to classify object proposals and refine their spatial locations. The resulting method can train a very deep detection network (VGG16) 9× faster than R-CNN and 3× faster than SPPnet. At runtime, the detection network processes images in 0.3s (excluding object proposal time) while achieving top accuracy on PASCAL VOC 2012 with a mAP of 66%.

The Region-based Convolutional Network method (R-CNN) achieves excellent object detection accuracy by using a deep ConvNet to classify object proposals. R-CNN, however, has notable drawbacks:

1. Training is a multi-stage pipeline. R-CNN first fine-tunes a ConvNet on object proposals using log loss. Then, it fits SVMs to ConvNet features. These SVMs act as object detectors, replacing the softmax classifier learnt by fine-tuning. In the third training stage, bounding-box regressors are learned.
2. Training is expensive in space and time. For SVM and bounding-box regressor training, features are extracted from each object proposal in each image and written to disk. With very deep networks, such as VGG16, this process takes 2.5 GPU-days for the 5k images of the VOC07 trainval set. These features require hundreds of gigabytes of storage.
3. Object detection is slow. At test-time, features are extracted from each object proposal in each test image. Detection with VGG16 takes 47s / image (on a GPU).

R-CNN is slow because it performs a ConvNet forward pass for each object proposal, without sharing computation.
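To make the cost of unshared computation concrete, the following minimal sketch counts ConvNet forward passes under two schemes: one pass per object proposal (as R-CNN does) and one pass per image with the result shared across that image's proposals. The function name `forward_passes` and the figure of 2,000 proposals per image are assumptions chosen for illustration; only the 5k image count comes from the text.

```python
def forward_passes(num_images: int, proposals_per_image: int, shared: bool) -> int:
    """Count ConvNet forward passes needed to extract proposal features.

    shared=False: one forward pass per proposal (the R-CNN scheme).
    shared=True:  one forward pass per image, reused by all of its proposals.
    """
    if shared:
        return num_images
    return num_images * proposals_per_image


# 5k images as in the VOC07 trainval set; 2,000 proposals per image is
# a hypothetical value used only to illustrate the scaling.
NUM_IMAGES = 5_000
PROPOSALS_PER_IMAGE = 2_000

per_proposal = forward_passes(NUM_IMAGES, PROPOSALS_PER_IMAGE, shared=False)
per_image = forward_passes(NUM_IMAGES, PROPOSALS_PER_IMAGE, shared=True)

print(f"per-proposal passes: {per_proposal:,}")
print(f"shared passes:       {per_image:,}")
print(f"reduction factor:    {per_proposal // per_image}x")
```

The count is only a rough proxy for runtime. A shared pass runs over the whole image rather than a cropped proposal, and per-proposal work after the shared layers still remains, so the reduction in passes should not be read as the measured speedup.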

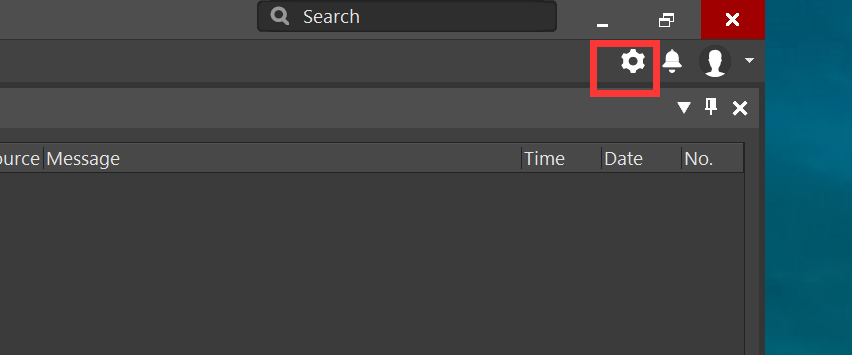

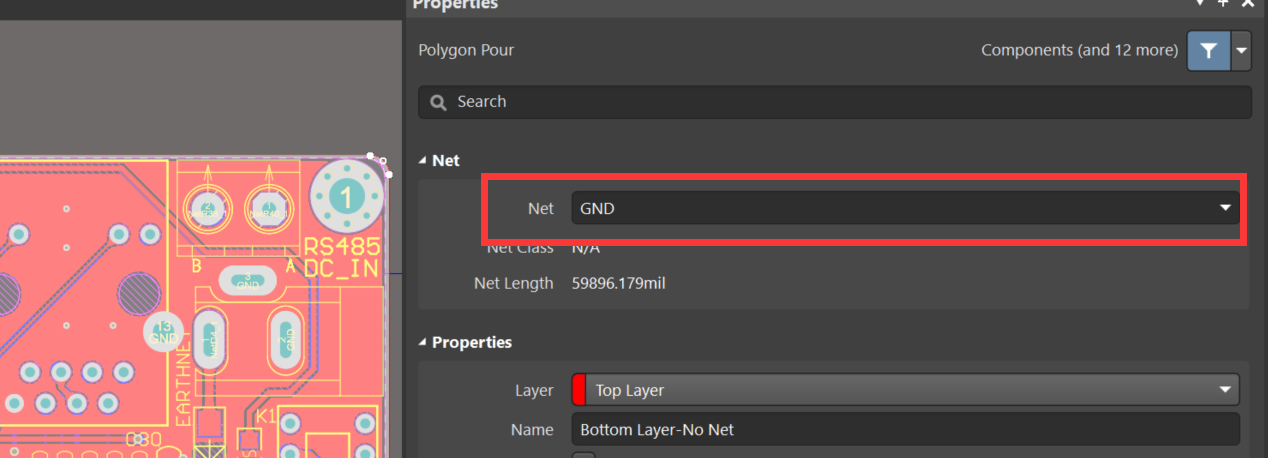


Fast R-CNN

Ross Girshick, Microsoft Research

This paper proposes a Fast Region-based Convolutional Network method (Fast R-CNN) for object detection. Fast R-CNN builds on previous work to efficiently classify object proposals using deep convolutional networks. Compared to previous work, Fast R-CNN employs several innovations to improve training and testing speed while also increasing detection accuracy. Fast R-CNN trains the very deep VGG16 network 9× faster than R-CNN, is 213× faster at test-time, and achieves a higher mAP on PASCAL VOC 2012. Compared to SPPnet, Fast R-CNN trains VGG16 3× faster, tests 10× faster, and is more accurate. Fast R-CNN is implemented in Python and C++ (using Caffe) and is available under the open-source MIT License at https: ///rbgirshick/fast-rcnn.
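The speedups quoted in the abstract are all relative to Fast R-CNN, but they also imply how SPPnet and R-CNN compare with each other. The short sketch below works out that arithmetic. The helper name `implied_ratio` is hypothetical, and because the quoted factors are rounded, the derived ratios are approximate rather than measured results.

```python
# Speedup of Fast R-CNN over each baseline with VGG16, as quoted in the abstract.
TRAIN_SPEEDUP = {"R-CNN": 9.0, "SPPnet": 3.0}
TEST_SPEEDUP = {"R-CNN": 213.0, "SPPnet": 10.0}


def implied_ratio(speedups: dict, slower: str, faster: str) -> float:
    """How many times faster `faster` is than `slower`.

    If Fast R-CNN is a-times faster than `slower` and b-times faster than
    `faster`, then `faster` is (a / b)-times faster than `slower`.
    """
    return speedups[slower] / speedups[faster]


sppnet_vs_rcnn_train = implied_ratio(TRAIN_SPEEDUP, "R-CNN", "SPPnet")
sppnet_vs_rcnn_test = implied_ratio(TEST_SPEEDUP, "R-CNN", "SPPnet")

print(f"SPPnet trains about {sppnet_vs_rcnn_train:.1f}x faster than R-CNN")
print(f"SPPnet tests about {sppnet_vs_rcnn_test:.1f}x faster than R-CNN")
```

Under these figures, SPPnet would train roughly 3× faster and test roughly 21× faster than R-CNN.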